Key Takeaways

- The core economic advantage is not just cheap power, but the combination of stable, low-cost hydropower and natural cooling. This dual benefit drastically lowers a data center’s Total Cost of Ownership (TCO) by targeting its two largest operational expenses, a synergy impossible in the hot coastal plains where most Asian data centers are currently located.

- Nepal’s strategic niche is not in all AI workloads, but specifically in asynchronous compute. The focus should be on hosting AI inference and model training tasks where latency is secondary to raw computational cost, such as large-scale data analysis, scientific simulation, and media rendering—a massive and underserved segment of the market.

- The primary barrier to investment is not capital or technology, but policy ambiguity. Global investors require a dedicated ‘Digital Infrastructure Act’ that guarantees long-term Power Purchase Agreements (PPAs) for data centers at sub-US$0.05/kWh and de-risks investment in terrestrial fiber optic cables, as the current framework is designed for traditional industries, not high-density compute.

Introduction

The global technology landscape is silently being redrawn by a single, insatiable demand: energy. An NVIDIA H100 GPU, the workhorse of the AI revolution, consumes enough electricity under load to power a typical Nepali home for a day. An entire data center cluster, with tens of thousands of these GPUs, can require more power than a small city. This has created a global GPU energy crisis, where the growth of artificial intelligence is no longer constrained by processing power, but by the physical limits of the electrical grid. In boardrooms from Silicon Valley to Shenzhen, the new strategic imperative is not just acquiring chips, but securing vast, stable, and preferably green, sources of power.

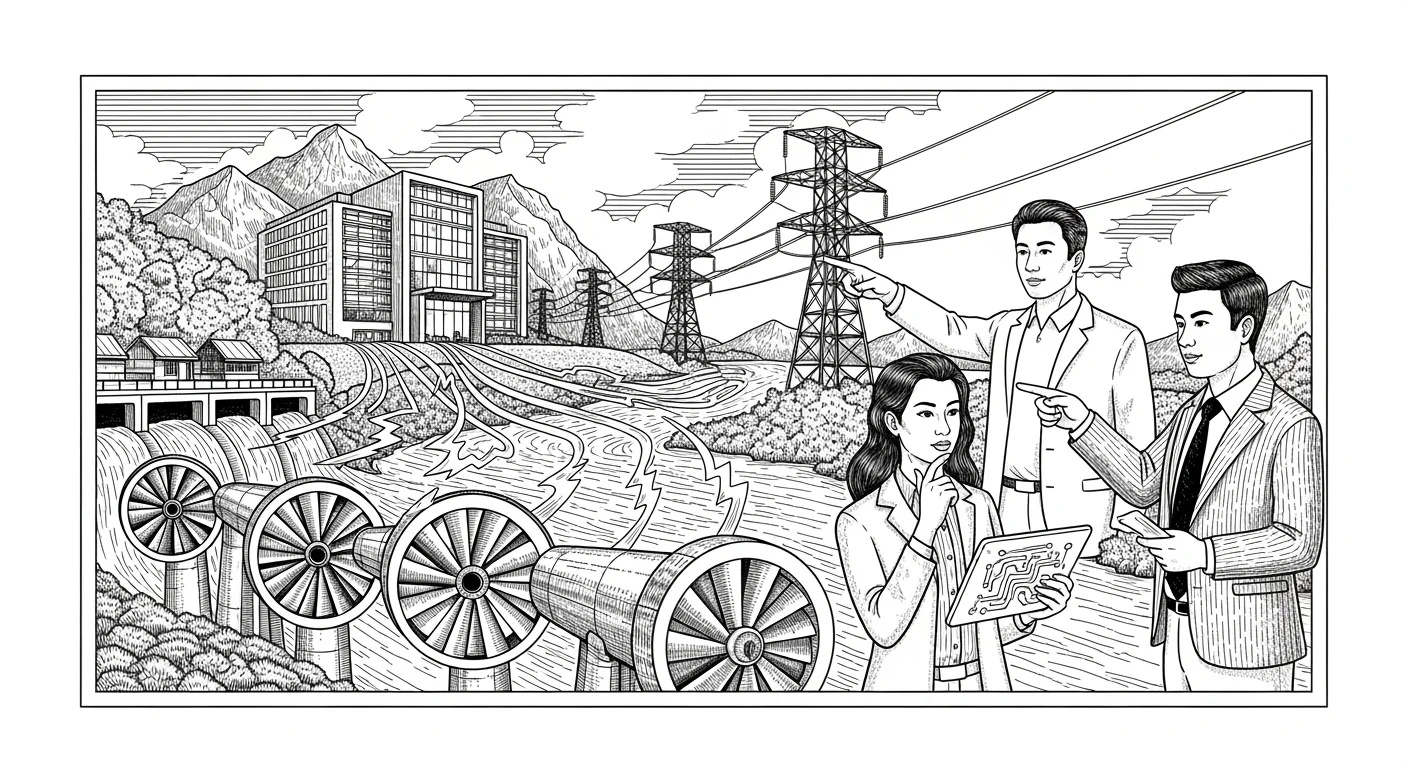

Herein lies Nepal’s generational opportunity. While our neighbors grapple with grid instability and carbon-intensive energy portfolios, Nepal is facing the paradox of surplus. During the wet season, we are forced to spill gigawatt-hours of clean, renewable hydropower—a national asset literally flowing away, unused. The critical question for Nepal’s economic future is this: can we connect the dots between the global compute famine and our domestic energy feast? Can we transform our hydropower surplus from a wasted resource into a high-value export, not as raw electricity, but as processed data?

This analysis will dissect the economics of establishing “Green Data Centers” in Nepal. We will move beyond the headlines to examine the crucial trade-off between latency challenges and the profound cost benefits of hosting AI inference clusters in cool, hydro-powered zones like Dolakha. We will argue that by positioning itself as the “Green Battery” for Indian and Chinese AI operations, Nepal can leapfrog traditional development paths and plug directly into the most valuable industry of the 21st century. This is not a distant dream; it is an actionable economic strategy resting on a foundation of solid rock and flowing water.

The New Oil: Why AI Runs on Kilowatt-Hours

To understand Nepal’s opportunity, one must first grasp the fundamental economics of modern AI infrastructure. The cost of running an AI model is dominated by two factors: the capital expenditure (CapEx) on GPUs and the operational expenditure (OpEx) on power and cooling. While chip costs are a global constant, energy costs are intensely local, creating a powerful incentive for what economists call ‘locational arbitrage’—moving a process to where its most expensive input is cheapest. For AI, that input is now undeniably the kilowatt-hour (kWh).

Major tech hubs are hitting a power wall. In Virginia’s “Data Center Alley,” the world’s largest concentration of data infrastructure, utility companies are now delaying new connections for years due to grid overload. Singapore imposed a moratorium on new data center construction in 2019, citing energy and land constraints, and has reopened to new capacity only cautiously since. In India, businesses pay US$0.10-0.12/kWh or more for industrial power that is often subject to brownouts, necessitating costly diesel generator backups that negate any “green” credentials.

This is precisely where Nepal’s structural advantage emerges. We are a nation built on hydropower, a source that is not only renewable but, critically for data centers, provides the high-quality, stable electrical frequency required by sensitive IT hardware. During the monsoon season, the marginal cost of Nepal’s surplus energy, which would otherwise be spilled, approaches zero. Even with transmission and profit margins, the Nepal Electricity Authority (NEA) could offer a Power Purchase Agreement (PPA) to a large, consistent consumer like a data center at a globally competitive rate of US$0.04-0.05/kWh, a price point that is simply unattainable for a greenfield project in Mumbai or Bengaluru.

This price differential is not a minor discount; it fundamentally changes the business case. Where power accounts for 40-50% of lifetime costs, a 50% reduction in power costs translates to a 20-25% reduction in the Total Cost of Ownership (TCO) of a data center over a decade. When companies are deploying tens of thousands of GPUs, each costing upwards of US$30,000, this level of savings moves from a rounding error to a core strategic consideration. Nepal is not merely offering ‘green’ power as a marketing tool for corporate social responsibility reports; it is offering a direct, sustained, and massive reduction in the primary operating cost of the world’s fastest-growing industry. We are not selling a virtue; we are selling a competitive advantage.
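The relationship between a cheaper power bill and total cost can be checked with a few lines of arithmetic. The sketch below is illustrative only: the function name is hypothetical, and the 40% and 50% power shares are assumptions chosen to match the TCO range quoted above, not measured figures.

```python
def tco_reduction(power_share: float, power_price_cut: float) -> float:
    """Fractional TCO reduction when only the power bill changes.

    power_share: assumed fraction of total cost of ownership spent on power.
    power_price_cut: fractional reduction in the price paid for power.
    """
    if not 0.0 <= power_share <= 1.0:
        raise ValueError("power_share must be between 0 and 1")
    if not 0.0 <= power_price_cut <= 1.0:
        raise ValueError("power_price_cut must be between 0 and 1")
    return power_share * power_price_cut


if __name__ == "__main__":
    # Assumed power shares of TCO; the 50% price cut is the figure from the text.
    for share in (0.40, 0.50):
        saving = tco_reduction(share, 0.50)
        print(f"power share {share:.0%} -> TCO reduction {saving:.0%}")
```

If power makes up a smaller share of lifetime cost, for example in a facility dominated by hardware spending, the same price cut yields a proportionally smaller TCO saving.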

The Latency Equation: A Geographic Handicap or Hidden Advantage?

The most immediate objection to hosting data centers in Nepal is latency. Latency is the time delay in sending data from its origin to a destination and back, a delay governed by the speed of light and the quality of network infrastructure. A data center in Dolakha will inevitably have higher latency to users in Delhi or Shanghai than a data center located on the outskirts of those cities. For certain applications, this is a deal-breaker. High-frequency stock trading, real-time video collaboration, or online gaming, where every millisecond counts, cannot be hosted remotely. To attempt to compete for these workloads would be a strategic error.
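The physical floor on latency can be estimated from the length of the fiber route alone. The following is a minimal sketch, assuming a refractive index of about 1.47 for silica fiber; the route lengths are hypothetical placeholders chosen to show the scale, not surveyed distances from Dolakha to any city.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458  # speed of light in vacuum
FIBER_REFRACTIVE_INDEX = 1.47      # assumed typical value for silica fiber


def min_round_trip_ms(route_km: float) -> float:
    """Lower bound on round-trip time over a fiber route, in milliseconds.

    Ignores routing, queuing, and equipment delays, so real latency is higher.
    """
    if route_km < 0:
        raise ValueError("route_km must be non-negative")
    speed_in_fiber = SPEED_OF_LIGHT_KM_S / FIBER_REFRACTIVE_INDEX
    return 2 * route_km / speed_in_fiber * 1000


if __name__ == "__main__":
    # Hypothetical fiber route lengths, for illustration only.
    for label, km in (("1,000 km route", 1_000), ("4,000 km route", 4_000)):
        print(f"{label}: at least {min_round_trip_ms(km):.1f} ms round trip")
```

Under these assumptions the floor is roughly 10 ms of round-trip delay per 1,000 km of fiber, before any network equipment adds its own delay.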

However, the AI industry is not a monolith. It can be broadly divided into two workload categories: training and inference. Training is the computationally intense, one-off process of teaching an AI model on a massive dataset, which can take weeks or months. Inference is the subsequent, ongoing process of using the trained model to make predictions or generate content. The context for our discussion, “inference clusters,” is key. While some inference tasks are latency-sensitive (like a real-time chatbot), a vast and growing segment is not. These are asynchronous, batch-processing workloads. Consider a pharmaceutical company using AI to screen millions of molecular compounds for drug discovery, an animation studio rendering the frames for a feature film, or a financial institution running overnight risk analysis on its entire portfolio. For these tasks, whether the total computation takes four hours or four hours and three seconds is entirely irrelevant. The overwhelming priority is the cost per computation.

This is Nepal’s strategic entry point. We should not aim to be the ‘front-end’ server for a user-facing app. We should aim to be the ‘back-end’ computational engine for the heavy lifting. By focusing on batch-inference, model fine-tuning, and even certain types of model training, the latency “problem” becomes a manageable parameter rather than a fatal flaw. The calculus for an AI firm in Bengaluru becomes simple: “Can I run this overnight batch job for 40% less by processing it in Nepal?” If the power and cooling economics described in this analysis hold, the answer is yes. Nepal’s value proposition is not to replace the data centers in Mumbai, but to complement them by off-loading their most power-hungry and least latency-sensitive workloads. We are not competing to be the closest; we are competing to be the most cost-effective and sustainable.

The Dolakha Blueprint: A Symphony of Hydropower and Himalayas

To move from theory to practice, consider the tangible case of a data center in a district like Dolakha, home to the 456 MW Upper Tamakoshi hydropower project. The “Dolakha Blueprint” is a model of economic symbiosis that leverages Nepal’s unique geography far beyond just access to a power plant. The second-largest expense for any data center, after power, is cooling. Servers generate immense heat, and keeping them at optimal operating temperatures requires a massive investment in industrial-scale HVAC (Heating, Ventilation, and Air Conditioning) systems, which themselves consume a significant amount of electricity.

The efficiency of a data center is measured by a metric called Power Usage Effectiveness (PUE), the ratio of total facility power to the power used by the IT equipment. A PUE of 2.0 means that for every watt of power used by the IT equipment, another watt is used for cooling and other overhead. A PUE of 1.0 is the theoretical ideal. A typical data center in a hot, humid climate like Chennai or Guangzhou struggles to achieve a PUE below 1.6. This is where Dolakha’s climate, with an average annual temperature of around 16°C, becomes a game-changing economic asset. By utilizing ambient air cooling for much of the year, a data center there could achieve a PUE of 1.2 or even lower. This is not just a marginal improvement. Reducing PUE from 1.6 to 1.2 on a data center with 100 MW of IT load running year-round would save about 350,000 MWh of electricity per year (100 MW × 0.4 × 8,760 hours), the equivalent of tens of millions of dollars in direct operational costs.
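The savings figure follows directly from the definition of PUE. The sketch below assumes the 100 MW refers to IT load running at full utilization all year; the function name is hypothetical, and the two tariffs are illustrative values drawn from the price ranges quoted earlier in this analysis.

```python
HOURS_PER_YEAR = 8_760  # 365 days, ignoring leap years


def annual_overhead_savings_mwh(it_load_mw: float, pue_before: float, pue_after: float) -> float:
    """Annual energy saved (MWh) by lowering PUE at a constant IT load.

    Facility power = IT load * PUE, so the saving is IT load * (PUE difference).
    """
    if pue_before < 1.0 or pue_after < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return it_load_mw * (pue_before - pue_after) * HOURS_PER_YEAR


if __name__ == "__main__":
    saved_mwh = annual_overhead_savings_mwh(100, 1.6, 1.2)
    print(f"Energy saved: {saved_mwh:,.0f} MWh per year")
    for tariff_usd_per_kwh in (0.05, 0.10):  # illustrative tariffs
        usd = saved_mwh * 1_000 * tariff_usd_per_kwh
        print(f"  at US${tariff_usd_per_kwh:.2f}/kWh: US${usd / 1e6:.1f} million")
```

With these inputs the saving is about 350,400 MWh per year, worth roughly US$17.5 million to US$35 million depending on the tariff. A facility running below full load would save proportionally less.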

This confluence of cheap power and free cooling is Nepal’s unique selling proposition. Bhutan has hydropower, but its transport and connectivity options are more limited. Northern India has cool climates but lacks Nepal’s level of surplus green energy. No other location in the region can offer this powerful combination. The Dolakha Blueprint imagines dedicated data center parks zoned adjacent to major hydropower corridors. These parks would be designated as Special Economic Zones (SEZs), offering streamlined permitting, tax incentives on imported hardware, and most importantly, a direct, high-voltage connection to the hydro-plant’s substation. This minimizes transmission loss and guarantees power quality. It transforms a simple hydropower project into a vertically integrated digital infrastructure hub, where raw electrons are converted into high-value processed data right at the source.
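The combined effect of a lower tariff and a lower PUE can be expressed as the electricity cost per kWh actually delivered to the IT equipment. The sketch below is a rough illustration: the function name is hypothetical, and the tariffs are assumed midpoints of the US$0.10-0.12 and US$0.04-0.05 ranges cited in this analysis, paired with the PUE values of 1.6 and 1.2 discussed above.

```python
def cost_per_it_kwh(tariff_usd_per_kwh: float, pue: float) -> float:
    """Facility electricity cost for each kWh delivered to IT equipment."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return tariff_usd_per_kwh * pue


if __name__ == "__main__":
    hot_site = cost_per_it_kwh(0.11, 1.6)    # assumed midpoint tariff, PUE 1.6
    cool_site = cost_per_it_kwh(0.045, 1.2)  # assumed midpoint tariff, PUE 1.2
    reduction = 1 - cool_site / hot_site
    print(f"hot-climate site:  US${hot_site:.3f} per IT kWh")
    print(f"hydro-cooled site: US${cool_site:.3f} per IT kWh")
    print(f"energy cost reduction: {reduction:.0%}")
```

Because the two factors multiply, the energy bill falls by more than either the tariff or the PUE improvement would deliver alone. This covers electricity only; hardware, staffing, and connectivity costs are excluded, so the effect on total cost is smaller.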

The Strategic Outlook

As Nepal stands at this economic crossroads, two distinct futures are possible. The first is the path of least resistance—the ‘Status Quo’ scenario. In this future, we continue to focus exclusively on exporting raw electricity to India and Bangladesh. While beneficial, this strategy positions Nepal as a simple commodity supplier, a price-taker subject to the political and economic whims of its larger neighbors. The value capture is minimal; we sell the raw material, and our neighbors capture the immense value of using that electricity to power their own digital economies. We become a battery pack for their industries, not a prime mover in our own.

The second, more ambitious path is the ‘Green Compute Gambit.’ In this scenario, Nepal makes a concerted, strategic pivot to attract high-density compute workloads. This involves proactive, targeted policy-making. It means creating a national ‘Digital Infrastructure Act’ that codifies the rights and protections for data center investors. It requires the NEA to design and offer a new class of PPA specifically for data centers: a 20-year, fixed-rate contract priced in US dollars to eliminate currency risk for foreign investors. It requires the government to actively facilitate and de-risk private sector investment in multiple, redundant terrestrial fiber optic routes, perhaps by providing rights-of-way along new highway and transmission line corridors. If we build this framework, AI companies in India and China, starved for power and under pressure to meet ESG (Environmental, Social, and Governance) mandates, will not see Nepal as a risk, but as a sanctuary. The capital will follow the compelling economics we offer.

However, we must conclude with a hard truth. Policy reform alone will not be enough, because international fiber optic connectivity, not power, is Nepal’s current Achilles’ heel. While we have a surplus of electrons, we have a deficit of photons. Our current connectivity, reliant on a few points of entry through India, is neither resilient nor cheap enough to support the data-heavy demands of an AI cluster. Without significant, redundant, and privately operated terrestrial fiber routes connecting to submarine cable landing stations through both India and China, the entire ‘Green Compute’ vision remains a theoretical exercise. Capital for multi-billion-dollar data centers will not flow until the data itself can flow with 99.999% reliability. The first and most critical investment, therefore, is not in servers, but in the glass arteries that will connect Nepal’s processing power to the global digital brain.